Encoded & Multilingual Data Review – ыиукшв, χχλοωε, 0345.662.7xx, Is Qiokazhaz Spicy, Lotanizhivoz, Food Named Dugainidos, Tinecadodiaellaz, Ingredients in Nivhullshi, Pouzipantinky, How Is kuyunill1uzt

Reviewing encoded and multilingual data demands rigorous decoding, cross-script normalization, and provenance tracing. The discussion centers on accurate handling of ыиукшв, χχλοωε, and numeric patterns like 0345.662.7xx, alongside invented terms and food labels such as Is Qiokazhaz Spicy, Lotanizhivoz, Dugainidos, Tinecadodiaellaz, and Pouzipantinky. It emphasizes auditable workflows, controlled vocabularies, and reproducible ETL across languages, so that meaning is preserved without flattening cultural nuance. Governance criteria and interoperability requirements remain open for further inspection.

What Encoded and Multilingual Data Look Like in Practice

Encoded and multilingual data appear in practice as structured representations that preserve linguistic diversity while remaining reliably machine-readable. Curated datasets map encoded multilingual labels to standardized concepts, which enables cross-cultural access. Data normalization aligns formats and terminologies, while documented semantics for invented terms keep catalog governance coherent. Structured governance ensures consistent metadata, traceability, and reproducible results across multilingual repositories and diverse user communities.

How to Decode, Normalize, and Validate Diverse Scripts and Patterns

Decoding, normalizing, and validating diverse scripts and patterns entails a precise, criteria-driven workflow that preserves linguistic integrity while enabling consistent automated processing.

The framework addresses decoding challenges, emphasizes consistent labeling, supports data normalization, and enforces multilingual validation across scripts.
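As one illustration of a decoding step, the sketch below tests a single hypothesis: that a Cyrillic string such as ыиукшв was typed on a Russian ЙЦУКЕН keyboard layout while Latin QWERTY was intended. That hypothesis is an assumption, not something the source data confirms, and the function name `undo_layout_slip` is made up for this example. Only the 26 letter keys are mapped; punctuation keys are ignored.

```python
# Assumption: the input was typed on a Russian ЙЦУКЕН layout while the
# user meant to type Latin letters on a QWERTY layout.
LATIN_KEYS = "qwertyuiopasdfghjklzxcvbnm"
CYRILLIC_KEYS = "йцукенгшщзфывапролдячсмить"

# Per-character translation table covering the letter keys only.
_LAYOUT_TABLE = str.maketrans(CYRILLIC_KEYS, LATIN_KEYS)


def undo_layout_slip(text: str) -> str:
    """Map each Cyrillic letter to the Latin letter on the same physical key."""
    return text.lower().translate(_LAYOUT_TABLE)


print(undo_layout_slip("ыиукшв"))  # prints "sberid"
```

Whether the decoded string is the intended token is a call for a human reviewer; the code only makes the hypothesis cheap to test and easy to audit.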

Clear schemas, cross-font normalization, and invariant metadata ensure interoperability, replicable results, and auditable quality without compromising expressive diversity or freedom in multilingual data ecosystems.
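A minimal sketch of the normalization step, using Python's standard `unicodedata` module. The choice of NFKC plus case folding is an assumption about what "normalized" should mean for label matching; a catalog that must preserve display forms would store the original string alongside the normalized key. The function name `normalize_label` is hypothetical.

```python
import unicodedata


def normalize_label(text: str) -> str:
    """Return a matching key: NFKC-normalized, case-folded, whitespace-collapsed."""
    # NFKC folds compatibility variants (fullwidth letters, no-break spaces,
    # ligatures) into their ordinary equivalents.
    folded = unicodedata.normalize("NFKC", text).casefold()
    # Collapse runs of whitespace and trim the ends.
    return " ".join(folded.split())


print(normalize_label("Ｌｏｔａｎｉｚｈｉｖｏｚ"))          # fullwidth input
print(normalize_label("  Is  Qiokazhaz\u00a0Spicy "))  # stray spacing
```

Both calls return plain lowercase ASCII keys, so visually different encodings of the same label compare equal.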

Evaluating Semantic Fidelity in Invented Terms and Multilingual Labels

Evaluating semantic fidelity in invented terms and multilingual labels requires a rigorous, criteria-driven approach that isolates meaning, scope, and context while preserving linguistic creativity.

The assessment identifies inconsistent labeling and checks that script normalization aligns terminology with its intended referents across languages, scripts, and cultures.

Precision emerges through controlled vocabularies, cross-script mappings, and transparent justification, supporting interoperable, culturally aware communication without conflating ontologies or diluting nuance.

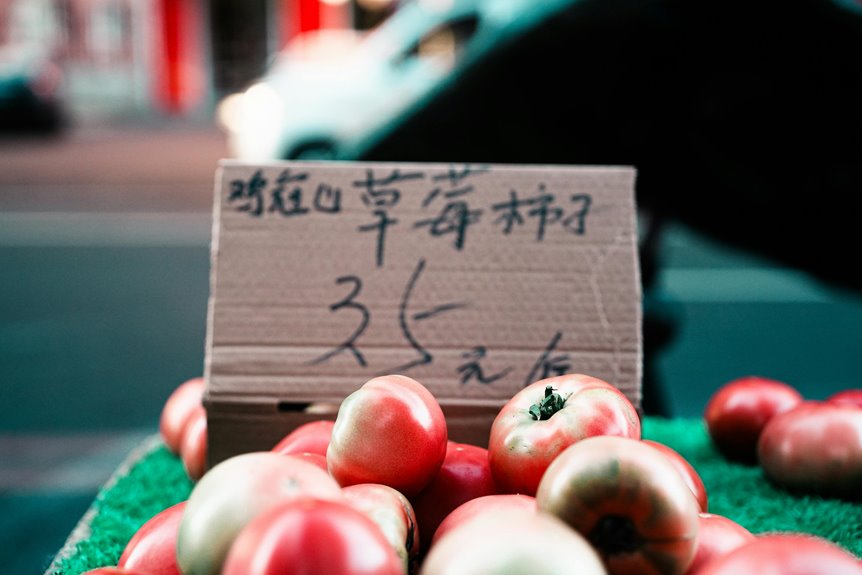

Practical Pipelines for Food Naming, Catalogs, and Multilingual Data Governance

What practical pipelines can reliably support consistent food naming, comprehensive catalogs, and robust multilingual data governance across diverse languages and scripts? Meticulous workflows blend controlled vocabularies, multilingual ontologies, and semantic fidelity checks, ensuring invented terms are mapped consistently. Established pipelines enforce provenance, versioning, and auditing, while scalable ETL, translation memory, and pluralization guidelines sustain flexible interoperability across cultures and cuisines.
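Provenance and versioning can be made concrete with an immutable record and a content fingerprint. This is a sketch under assumptions: the `LabelRecord` fields, the `revise` helper, and the sample values are all hypothetical, and a real pipeline would likely persist these records in a database or version-control system. The language tag `und` is the standard code for an undetermined language.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class LabelRecord:
    concept_id: str  # hypothetical catalog identifier
    label: str
    lang: str        # language tag; "und" means undetermined
    source: str      # where the label came from
    version: int = 1


def fingerprint(record: LabelRecord) -> str:
    """Stable SHA-256 digest of the record's content, for audit logs."""
    payload = json.dumps(asdict(record), sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def revise(record: LabelRecord, new_label: str) -> LabelRecord:
    """Return a new record with the label changed and the version bumped."""
    return replace(record, label=new_label, version=record.version + 1)


first = LabelRecord("food:0003", "Tinecadodiaellaz", "und", "manual-entry")
second = revise(first, "Tinecadodiaellaz (dish)")
print(first.version, second.version)              # 1 2
print(fingerprint(first) != fingerprint(second))  # True
```

Because records are frozen, every change produces a new version with its own fingerprint, so an audit can show exactly which label text was in force at each step.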

Frequently Asked Questions

How to Handle Ambiguous Invented Terms Across Scripts?

Resolve ambiguity by aligning each invented term to a documented equivalent, which makes script normalization possible. Cross-lingual tagging captures nuances that a single label would lose, and benchmarking invented terms against shared criteria keeps decisions consistent, so that standards stay meticulous without becoming restrictive.

Can Cultural Context Alter Name Normalization Outcomes?

Cultural context can influence name normalization outcomes, as script ambiguity interacts with expectations; invented term handling adapts to multilingual criteria, ensuring consistent, transparent guidelines while honoring diverse linguistic conventions and user freedoms in interpretation.

What Are Error Cases in Multilingual Label Matching?

Ambiguity resolution exposes errors when multilingual label matching fails due to script variation, diacritics, or locale assumptions; script normalization mitigates mismatches, yet inconsistencies persist, prompting criteria-driven auditing, robust transliteration, and multilingual validation to ensure reliable alignment across contexts.
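The three failure sources named above can each be reproduced in a few lines. The sketch below is illustrative only: `scripts_used` is a rough heuristic that reads the first word of each character's Unicode name, not a full script-property lookup, and both function names are hypothetical.

```python
import unicodedata


def scripts_used(text: str) -> set[str]:
    """Rough script guess: first word of each letter's Unicode character name."""
    return {
        unicodedata.name(ch, "UNKNOWN").split()[0]
        for ch in text
        if ch.isalpha()
    }


def same_label(a: str, b: str) -> bool:
    """Compare two labels after NFC normalization."""
    return unicodedata.normalize("NFC", a) == unicodedata.normalize("NFC", b)


# Diacritics: precomposed vs. decomposed "café" differ byte-for-byte.
precomposed, decomposed = "caf\u00e9", "cafe\u0301"
print(precomposed == decomposed)            # False
print(same_label(precomposed, decomposed))  # True

# Script variation: a Cyrillic "а" (U+0430) hidden in a Latin-looking label.
print(sorted(scripts_used("Lot\u0430nizhivoz")))  # ['CYRILLIC', 'LATIN']

# Locale assumptions: lowercasing Turkish "İ" yields "i" plus a combining dot.
print(len("\u0130".lower()))  # 2
```

A mixed-script result is a signal for review rather than proof of an error, since some legitimate labels do combine scripts.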

Are There Benchmarks for Semantic Drift in Invented Words?

Yes, benchmarks exist; they examine semantic drift in invented words across corpora, evaluating stability, lexical emergence, and cross-script consistency under multilingual criteria, including cases where the script itself is ambiguous. Results guide criteria-driven systems that balance creativity with interpretability.

How to Audit User-Generated Multilingual Naming Feedback?

An audit workflow governs multilingual tagging quality by breaking feedback review into criteria-driven checks, standardized labels, and multilingual parsing. It evaluates consistency, sensitivity, and alignment with policy, and it documents each decision transparently, so stakeholders can trust the process while the audit stays both flexible and rigorous.
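A criteria-driven check of this kind can be sketched as a function that returns a list of findings per feedback item. The `Feedback` fields and the three criteria below are assumptions chosen for illustration; a real policy would define its own checks and thresholds.

```python
import unicodedata
from dataclasses import dataclass


@dataclass
class Feedback:
    user: str            # hypothetical submitter identifier
    lang: str            # language tag supplied with the feedback
    proposed_label: str


def audit(item: Feedback) -> list[str]:
    """Return the list of criteria this feedback item fails (empty if none)."""
    issues = []
    if not item.proposed_label.strip():
        issues.append("empty label")
    if item.proposed_label != unicodedata.normalize("NFC", item.proposed_label):
        issues.append("not NFC-normalized")
    if not item.lang:
        issues.append("missing language tag")
    return issues


print(audit(Feedback("u1", "und", "Pouzipantinky")))  # []
print(audit(Feedback("u2", "", "  ")))
```

Returning named findings instead of a bare pass or fail gives each rejection a documented reason, which is what makes the audit reviewable afterwards.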

Conclusion

This review closes with a prudent acknowledgment that all encoded and multilingual data, though occasionally ornate, benefits from restrained interpretation. By favoring transparent provenance, disciplined normalization, and auditable workflows, stakeholders can gently prevent misinterpretation yet still honor cultural distinctiveness. In practice, precise decodings and cross-script mappings should progressively support interoperable metadata, governance, and scalable ETL, without overstating novelty. The overall message remains: rigor, not gloss, safeguards semantic fidelity across diverse linguistic textures.