Validate Incoming Call Data for Accuracy – 4699838768, 3509811622, 9108065878, 920577469, 3761752716, 4123879299, 2129919991, 5034367335, 2484556960, 9069840117

This article covers how to validate incoming call data for accuracy, using the numbers listed above as the working set. It relies on strict format checks, normalization to a uniform international standard, and deduplication across sources. Geo, carrier, and risk signals are weighed together, and anomalies are flagged for audit and human review. The procedures are deliberately methodical and skeptical, so that data quality does not drift and governance gaps do not open up. One question remains open throughout: can these controls reliably expose conflicts and dubious entries without compromising throughput?

What “Validating Incoming Call Data” Means in Practice

Validating incoming call data in practice begins with a clear definition of what constitutes "valid." This means specifying the data elements required for a call record, the acceptable format for each element, and the acceptable ranges or enumerations for values such as timestamps, caller identifiers, and call outcomes. With those rules written down, invalid records can be flagged explicitly rather than silently passed along, and the same checks support privacy compliance. The stance throughout is skeptical and methodical.
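
To make the idea of a written definition of "valid" concrete, here is a minimal sketch of a record check. The field names (`caller_id`, `timestamp`, `outcome`), the allowed outcomes, and the 7-digit lower bound are illustrative assumptions, not part of any real schema; the 15-digit upper bound comes from E.164.

```python
from datetime import datetime, timezone

# Hypothetical schema: field names and allowed outcomes are assumptions.
REQUIRED_FIELDS = ("caller_id", "timestamp", "outcome")
ALLOWED_OUTCOMES = {"answered", "missed", "voicemail", "rejected"}


def validate_call_record(record):
    """Return a list of problems; an empty list means the record is valid."""
    problems = []
    for field in REQUIRED_FIELDS:
        if field not in record or record[field] in (None, ""):
            problems.append(f"missing field: {field}")
    if problems:
        return problems

    # Caller identifier: count digits after ignoring separators.
    digits = "".join(ch for ch in str(record["caller_id"]) if ch.isdigit())
    if not 7 <= len(digits) <= 15:  # lower bound is an assumption
        problems.append("caller_id: unexpected digit count")

    # Timestamp: must parse as ISO 8601 and must not lie in the future.
    try:
        ts = datetime.fromisoformat(record["timestamp"])
        if ts.tzinfo is None:
            ts = ts.replace(tzinfo=timezone.utc)  # assumption: naive means UTC
        if ts > datetime.now(timezone.utc):
            problems.append("timestamp: in the future")
    except (TypeError, ValueError):
        problems.append("timestamp: not ISO 8601")

    # Outcome: must be one of the enumerated values.
    if record["outcome"] not in ALLOWED_OUTCOMES:
        problems.append("outcome: not in allowed set")
    return problems
```

Returning a list of problems instead of a boolean keeps every rejection explainable, which matters once records are sent for audit.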

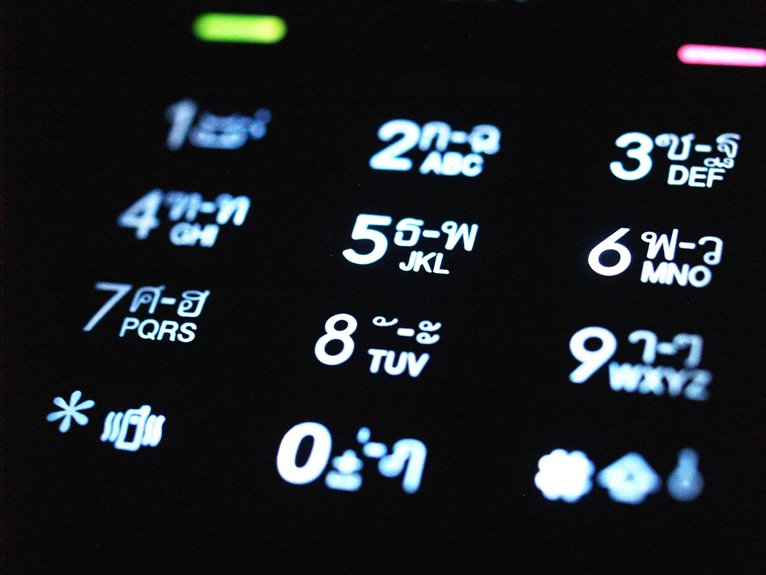

Start With Clean Data: Capture, Normalize, and Deduplicate Numbers

Start by capturing numbers exactly as received, then apply consistent normalization rules to convert them into a uniform format, and finally deduplicate, resolving conflicts between records that normalize to the same number.

The process emphasizes capture accuracy and disciplined validation. Deduplication should reveal the true set of unique numbers while avoiding two failure modes: false positives, where distinct numbers are treated as duplicates, and silent merges, where records are combined without leaving a trace.
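
The capture, normalize, deduplicate sequence can be sketched as follows. This assumes the numbers belong to the North American Numbering Plan (country code 1), which the source does not state; `normalize_nanp` and `dedupe` are hypothetical helper names.

```python
import re
from typing import Dict, List, Optional, Tuple


def normalize_nanp(raw: str) -> Optional[str]:
    """Return the number as +1XXXXXXXXXX, or None if the digit count is wrong."""
    digits = re.sub(r"\D", "", raw)  # drop spaces, dashes, parentheses, "+"
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # strip a leading country code
    if len(digits) != 10:
        return None
    return "+1" + digits


def dedupe(raw_numbers: List[str]) -> Tuple[Dict[str, List[str]], List[str]]:
    """Group raw captures by normalized value; collect rejects separately.

    Keeping the raw strings next to each normalized key means no merge
    happens silently: every duplicate can be traced to its original form.
    """
    unique: Dict[str, List[str]] = {}
    rejected: List[str] = []
    for raw in raw_numbers:
        normalized = normalize_nanp(raw)
        if normalized is None:
            rejected.append(raw)
        else:
            unique.setdefault(normalized, []).append(raw)
    return unique, rejected
```

Under this assumption, `920577469` from the list above has only nine digits and lands in `rejected` rather than being padded or guessed at.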

Techniques to Verify Caller Details: Format Validation, Geo and Carrier Checks, and Risk Signals

Techniques to verify caller details employ a disciplined, evidence-driven approach that combines format validation, geo and carrier checks, and risk signaling. The method remains skeptical and precise, rejecting vague assurances. It assesses invalid formats and flags duplicate records, ensuring consistency across sources. Geo disparities, carrier mismatches, and risk signals are weighed with reproducible criteria, fostering reliable, auditable judgments rather than assumptions.
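
One way to make the criteria reproducible is a fixed scoring table, sketched below. The format rule reflects the NANP convention that area codes and exchange codes begin with a digit from 2 to 9; the weights, the threshold, and the `Signals` fields are invented for illustration, and the geo and carrier flags are assumed to come from a lookup service that is not shown.

```python
import re
from dataclasses import dataclass

# +1, then an area code and an exchange code that each start with 2-9.
NANP_E164 = re.compile(r"^\+1[2-9]\d{2}[2-9]\d{6}$")

# Hypothetical weights and threshold; tune against audited data.
WEIGHTS = {"bad_format": 50, "geo_mismatch": 25, "carrier_mismatch": 15, "single_source": 10}
REVIEW_THRESHOLD = 40


@dataclass(frozen=True)
class Signals:
    geo_mismatch: bool      # claimed location disagrees with the number's region
    carrier_mismatch: bool  # sources disagree about the carrier
    seen_in_sources: int    # how many independent sources list the number


def risk_score(number, signals):
    """Return (score, reasons) so every judgment can be audited."""
    reasons = []
    if not NANP_E164.match(number):
        reasons.append("bad_format")
    if signals.geo_mismatch:
        reasons.append("geo_mismatch")
    if signals.carrier_mismatch:
        reasons.append("carrier_mismatch")
    if signals.seen_in_sources < 2:
        reasons.append("single_source")
    return sum(WEIGHTS[r] for r in reasons), reasons


def needs_review(score):
    return score >= REVIEW_THRESHOLD
```

With this rule, `3761752716` from the list fails the format check once normalized to `+13761752716`, because its exchange code `175` starts with 1.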

Automating Anomaly Detection and Remediation to Keep Data Accurate

Can automation reliably catch all anomalies, or will certain deviations persist despite system rules? Automated anomaly detection matches records against predefined patterns and, where models are used, updates them as new data arrives so that suspect records keep being flagged. Remediation pipelines must balance speed and accuracy and avoid overcorrection, such as "fixing" a record that was unusual but correct.

The emphasis remains on validated data and clearly defined anomaly patterns. Human review sustains accountability: it catches what the rules miss, prevents drift, and keeps data accuracy sustainable. Automation stays disciplined only when governance sets its limits.
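
A rule-based triage step that routes suspect records to human review instead of correcting them automatically might look like the sketch below. The two rules (one repeated digit in the last seven positions, and more calls from one caller than a limit allows) and the default limit are assumptions chosen for illustration.

```python
from collections import Counter


def triage(records, max_calls_per_caller=3):
    """Split records into (accepted, review) using predefined patterns.

    Nothing is modified or dropped here: flagged records are queued for a
    human reviewer together with the reasons they were flagged.
    """
    counts = Counter(r["caller_id"] for r in records)
    accepted, review = [], []
    for r in records:
        flags = []
        digits = r["caller_id"].lstrip("+")
        if len(set(digits[-7:])) == 1:  # e.g. ...5555555
            flags.append("repeated_digits")
        if counts[r["caller_id"]] > max_calls_per_caller:
            flags.append("burst_volume")
        if flags:
            review.append({**r, "flags": flags})
        else:
            accepted.append(r)
    return accepted, review
```

Keeping the reasons on each queued record gives reviewers the context they need and leaves an audit trail for later tuning of the rules.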

Conclusion

In sum, validating incoming call data is a disciplined, data-centric process: each candidate number is checked for format, normalized, and deduplicated before geo, carrier, and risk signals are cross-checked. Anomalies are flagged, conflicts are resolved, and auditable human review remains a non-negotiable control. The approach works like a tight-lipped auditor, methodically exposing drift and governance gaps and steering the data toward a stable, auditable record.